AI-generated fake image of Pentagon explosion goes viral on Twitter

The Incident: A Fake Image Goes Viral

On the morning of May 22, 2023, an AI-generated image depicting an explosion at the Pentagon, just outside Washington, D.C., went viral on Twitter, causing widespread concern and confusion. The incident highlights significant vulnerabilities in Twitter's verification system, especially since Elon Musk introduced the paid Twitter Blue verification service.

Rapid Spread and Official Denouncement

The fake image spread rapidly after it was posted. Only after U.S. government officials denounced it as a hoax did the spread begin to slow. Accounts with blue check marks, including Russian state media and a fake Bloomberg News account, had amplified the image and added to its perceived credibility.

Details of the Viral Post

Around 10 a.m. ET, a photo showing a blazing lawn near the Pentagon was posted with the caption, "Large Explosion near The Pentagon Complex in Washington D.C. - Initial Report." The image rapidly circulated, causing panic and confusion.

Forbes reported that a spokesperson from the U.S. Department of Defense labeled the photo as "misinformation." By 10:27 a.m. ET, Arlington Fire and EMS had also denounced the image on Twitter, stating that no explosions had occurred near the Pentagon that would pose any danger to the public.

Expert Analysis and Verification

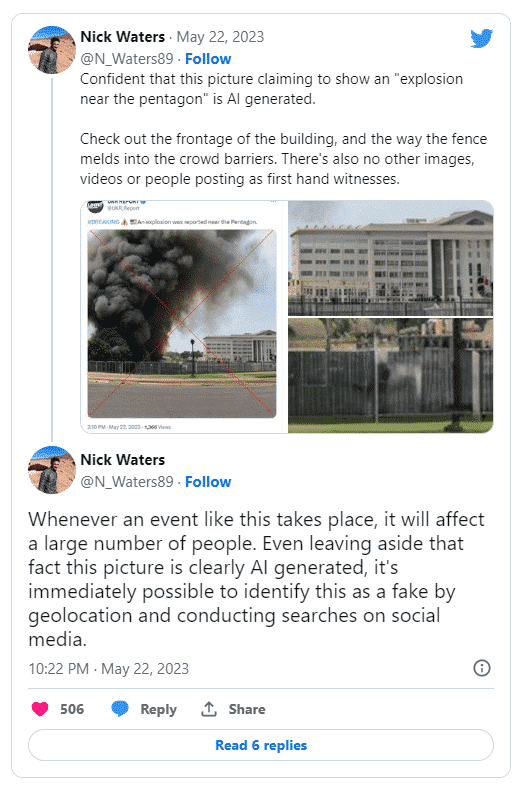

OSINT (Open Source Intelligence) experts were quick to analyze the image and identified inconsistencies indicating that it was AI-generated. Nick Waters, a digital investigator at Bellingcat, pointed to specific discrepancies, such as the unrealistic way the fence blends into the crowd barrier. He also emphasized that an event of this scale would be documented by multiple first-hand eyewitness photos or videos online, which were notably absent.

The Broader Impact of AI-Generated Fake Images

While AI-generated images can be entertaining, they pose serious risks, particularly in spreading misinformation. Since the release of ChatGPT in November 2022, the potential for AI-generated fake news has been a growing concern among governing bodies and AI experts.

In a related incident earlier in May, a man in China was arrested for using deepfake technology to create a false news video of a train collision. This video, shared widely on social media, highlights the dangers of AI in generating and spreading fake news.

The Verification System Under Scrutiny

The Pentagon image incident has brought attention to the flaws in Twitter's verification system. Andy Campbell, senior editor at The Huffington Post, discussed the challenges Twitter CEO Elon Musk faced with Twitter Blue verification. Previously, blue check marks were granted to verified accounts of legitimate organizations and individuals. However, under the new system, anyone willing to pay $8 a month can obtain a blue check mark, undermining the credibility of the verification process.

The controversy peaked in April, when many celebrities and journalists who had previously been verified for free refused to pay for the service. Twitter removed their blue check marks, further complicating the verification landscape.

Campbell highlighted the dangers of this pay-for-verification system. An account with a blue check mark can easily appear legitimate, even if it tweets AI-generated fake news, as was the case with the Pentagon explosion image. The fake Bloomberg News account responsible for spreading the image has since been deactivated, but the origin of the fake Pentagon image remains unknown.

Economic Consequences

Although the fake image was debunked quickly, its brief virality had real repercussions. The rumor coincided with a noticeable, short-lived dip in stocks traded on the New York Stock Exchange on Monday, demonstrating the potential economic impact of such misinformation.

Conclusion

The viral spread of the AI-generated Pentagon explosion image underscores the urgent need for more robust verification systems on social media platforms. As AI technology advances, so does the potential for generating and spreading misinformation, which poses significant challenges for digital security and public trust. Stronger safeguards are essential to keep information shared online credible and reliable, particularly during critical events and breaking news.